Identification: What is it?

- Iavor Bojinov

- Feb 17, 2020

- 3 min read

Updated: Feb 26, 2020

Identification and identifiable are two extremely commonly used words in Statistics and Economics. But, have you ever wondered, what does it really mean to say that a quantity is identifiable from the data? Statisticians seem to agree on a definition in the context of parametric models --- saying a parameter is identifiable if each parameter corresponds to a distinct parametric model --- but beyond that things get a little tricky. Unfortunately, this is a place where looking at the existing academic literature leaves us with more questions than answers: Are parameters the only quantities that can be identified? Is the concept of identification meaningful outside of parametric statistics? Does it even require the notion of a statistical model? Many authors have tried to answer these questions, but have tended to provide either partial or idiosyncratic answers in specific contexts.

In a recent paper, Guillaume Basse and I propose a unifying theory of identification that incorporates existing definitions for parametric and nonparametric models and formalizes the process of identification analysis. In this blog post, I am going to provide a brief and (hopefully) easy to understand description of our general theory. I will leave the identification analysis for another blog post, but if you are interested then just read the paper!

Contributions

We make two main contributions:

We provide a single general and mathematically rigorous definition of identifiability. Abstracting away the specifics of each domain allows us to recognize the commonalities and make the concepts more transparent as well as easier to extend to new settings.

We use our definition to develop a set of results and a systematic approach for determining whether a quantity is identifiable and, if not, what is its identification region (i.e., the set of values of the quantity that are coherent with the data and assumptions).

Statistical inference teaches us ``how'' to learn from data, whereas identification analysis explains ``what'' we can learn from it. Although ``what'' logically precedes ``how,'' the concept of identification has received relatively less attention in the statistics community.

A general theory of identification

Set up

Our framework consists of three elements:

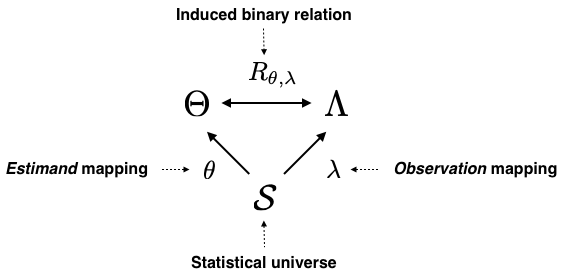

A statistical universe that contains all of the objects relevant to a given problem (S).

An estimand mapping that describes what aspect of the statistical universe we are trying to learn about (𝛉: S↦𝚹)

An observation mapping that tells us what parts of our statistical universe we observe (𝝀: S↦𝚲)

We then define identification by studying the inherent relationship between the estimand mapping and the observation mapping using the induced binary relation R. Intuitively, the induced binary relation connects ``what we know'' to ``what we are trying to learn'' through the ``statistical universe'' in which we operate.

Example: In parametric models where P(ϑ) is a distribution indexed by ϑ∈𝚹,

The statistical universe is S = {(P(ϑ),ϑ) ϑ∈𝚹}

The estimand mapping is 𝛉(S) = ϑ

The observation mapping is 𝝀(S) = P(ϑ).

The induced binary relation then maps R:ϑ ↦P(ϑ).

Definition of Identification

We say that 𝛉 is identifiable from 𝝀 if the induced binary relation R is injective. That is, if there is a 1-1 relationship between what we are trying to estimate and what we observe, then the estimand mapping is identifiable from the observation mapping. Of course, there is a bunch of maths to make this definition exact, but this is the essence of it.

Results

Our main results show that our general formulation allows us to work directly with both parametric and nonparametric models, without having to introduce separate definitions. What's more, our setup allows us to cleanly define identification in finite population settings without having to revert to using a nonparametric sampling argument.